No more FFmpeg installation!

Now, when you are rendering a video and don't have FFmpeg installed, Remotion will download a copy for you.

Previously, installing FFmpeg required 7 steps on Windows and took several minutes when using Homebrew on macOS.

When deploying Remotion on a server, you can now also let Remotion install FFmpeg for you using the ensureFfmpeg() API or the npx remotion install ffmpeg command. Learn more about FFmpeg auto-install here.

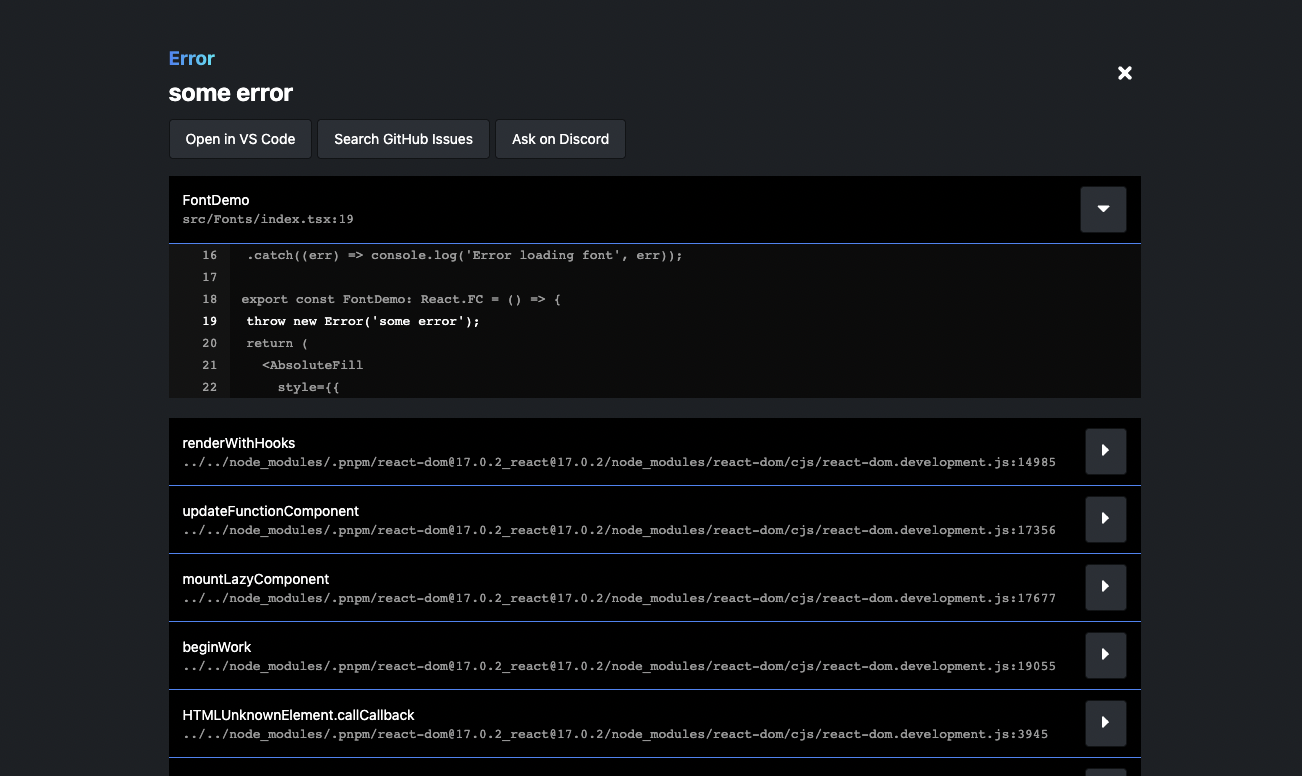

New @remotion/google-fonts package

It is now easy to import Google Fonts into Remotion! @remotion/google-fonts takes care of correct loading, and is fully type-safe!

New @remotion/motion-blur package

This package contains two components: <Trail> and <CameraMotionBlur>, assisting you with achieving awesome motion blur effects!

A quick demo of what is now called <Trail>:

New @remotion/noise package

This package offers easy, type-safe, pure functions for getting creative with noise. Check out our playground to see what you can do with it!

A video demo of how you can create interesting effects with noise:

New @remotion/paths package

This package offers utilities for animating and manipulating SVG paths! With 9 pure, type-safe functions, we cover many common needs while working with SVG paths:

Quick Switcher

By pressing Cmd+K, you can trigger a new Quick Switcher. It has three functions:

- Fuzzy-search for a composition to jump to that composition.

- Type > followed by an item in the menu bar to trigger that action.

- Type ? followed by a search term to query the docs.

Remotion Core

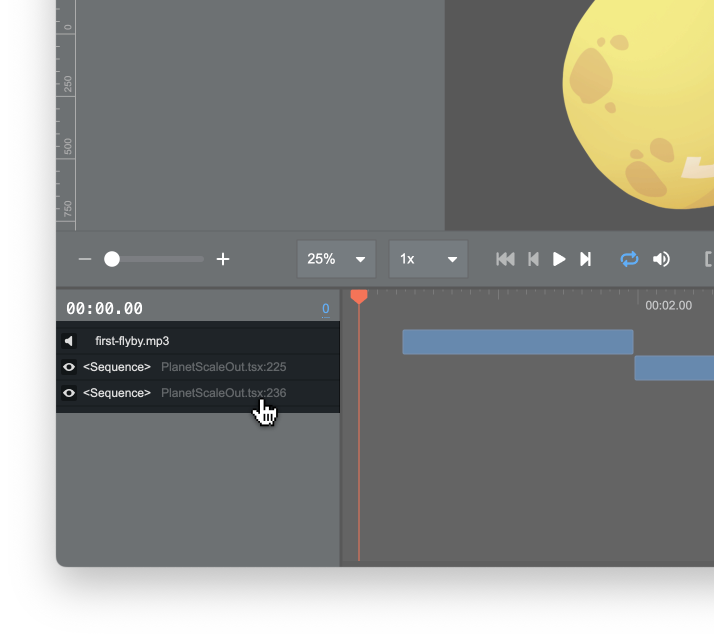

<Sequence> makes from optional, accepts style and ref

<Sequence from={0}> can now be shortened to <Sequence>. Our ESLint plugin was updated to suggest this refactor automatically.

You can now also style a sequence if you did not pass layout="none".

A ref can be attached to <Sequence> and <AbsoluteFill>.

Video and Audio support loop prop

The <Video> and <Audio> components now support the loop property.

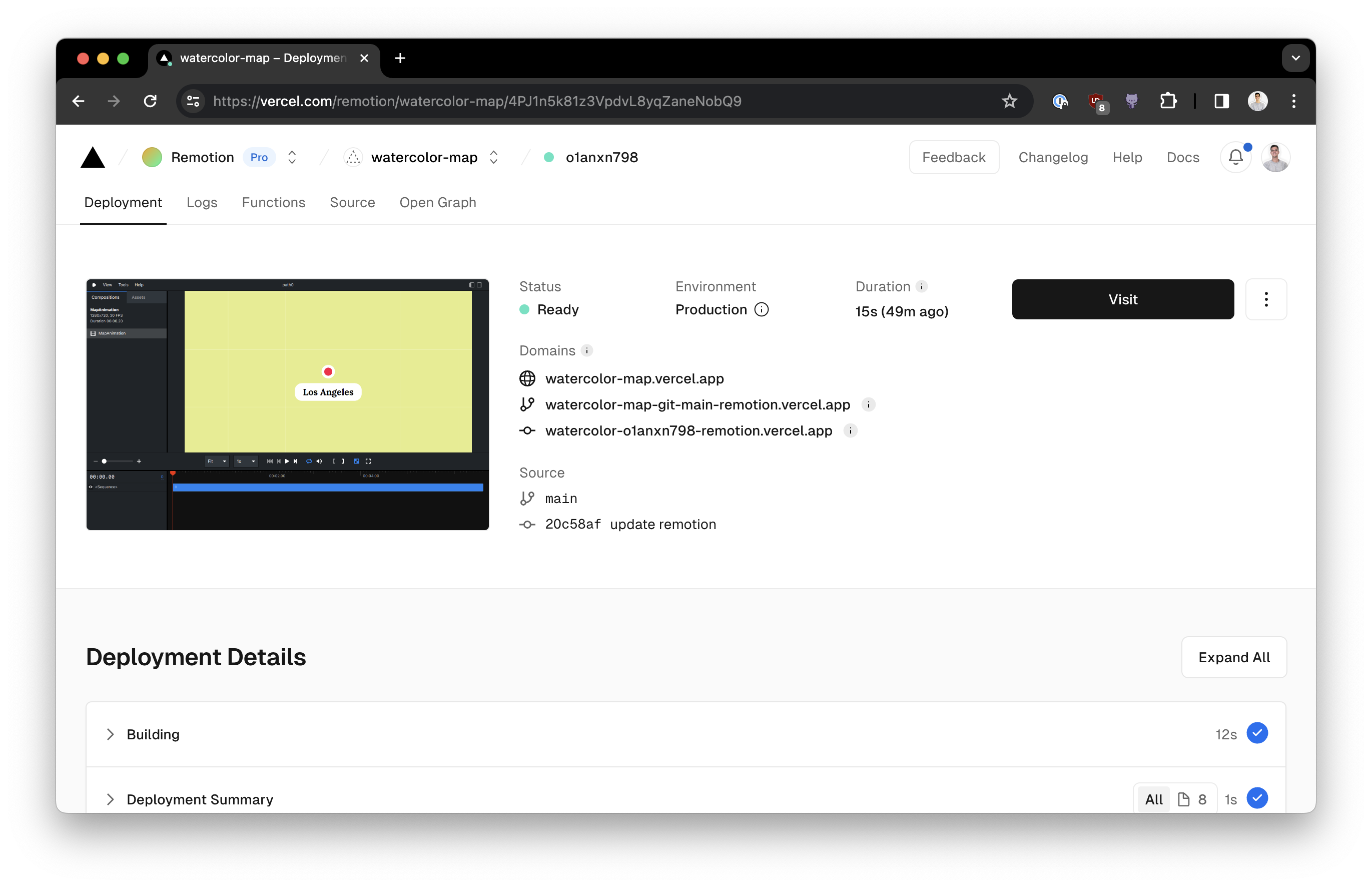

Preview

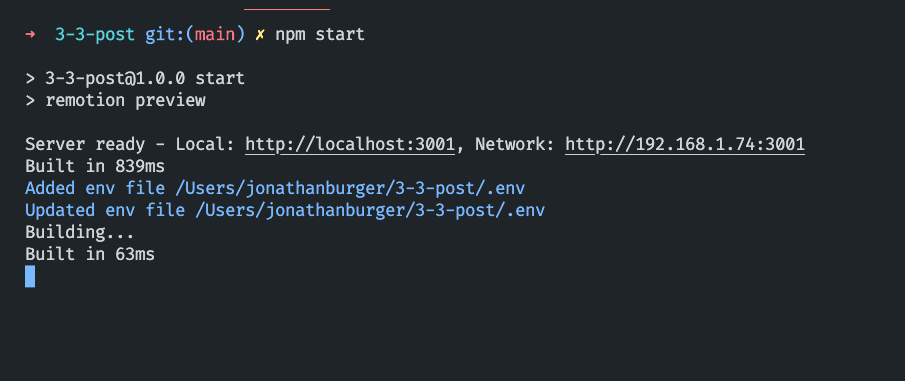

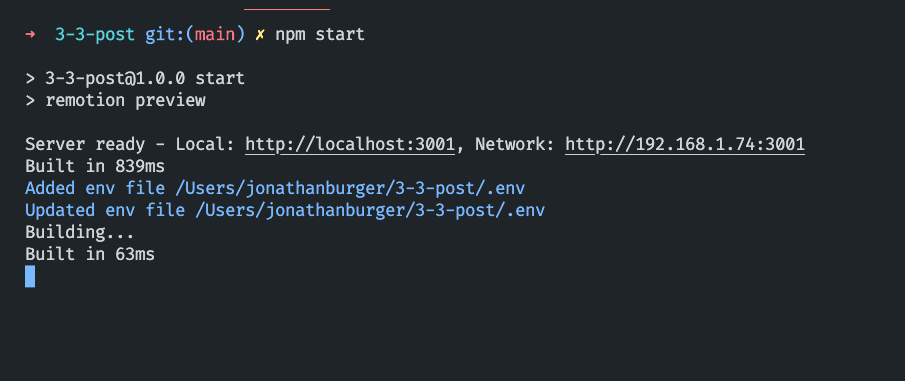

New CLI output

When starting the Remotion Preview, it now shows on which URL the preview is running. The Webpack output is now also cleaner.

Pinch to Zoom

If your device supports multitouch, you can now pinch to zoom the composition. Alternatively, you can hold Ctrl/Cmd and use your scrollwheel to zoom.

Using two fingers, you can move the canvas around. Pressing 0 resets the canvas, and there is also a button in the top-right corner that does the same.

Search the docs from the Remotion Preview

Pressing ? to reveal the keyboard shortcuts now has a secondary function: You can type in any term to search the Remotion documentation!

Shorter commands

Previously, a Remotion CLI command looked like this:

```bash
npx remotion render src/index.tsx my-comp output.mp4
```

We now allow you to skip the output name. In this case, the render lands in out/my-comp.mp4 by default:

```bash
npx remotion render src/index.tsx my-comp
```

You can also omit the composition name and Remotion will ask which composition to render:

```bash
npx remotion render src/index.tsx
```

Experimental: We might change the behavior to rendering all compositions in the future.

Finally, you can also omit the entry point and Remotion will take an educated guess!

If you deviate from the defaults of our templates, you can set an entry point in your config file and leave it out from Remotion commands.

Auto-reload environment variables

If you change values in your .env file, the Remotion Preview will reload and pick them up without having to restart.

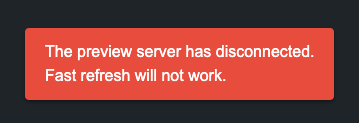

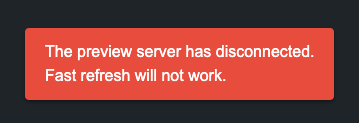

Signal that Remotion Preview disconnected

When you quit the Remotion Preview using Ctrl+C, for example to render a video, a new popup signals that Fast Refresh will no longer work.

Rendering

--muted render

This new flag can be passed to a render to ignore the audio. If you know that your video has no audio, this can make your render faster.

--enforce-audio-track

When no audio is detected in your video, the audio track is now dropped (except on Lambda). With this new flag, you can enforce that a silent audio track is added.

--audio-bitrate and --video-bitrate

These flags allow you to set a target bitrate for audio or video. They are not recommended, though; use --crf instead.

--height and --width flags

Using these flags, you can override the width and height you have defined for your output. Unlike --scale, this changes the viewport, so the layout may actually change.

Obtain slowest frames

If you add --log=verbose, the slowest frames are shown in order, so you can optimize them. Slowest frames are also available for renderMedia() using the onSlowestFrames callback.
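To illustrate what "slowest frames in order" means, here is a minimal sketch that ranks per-frame timings. The `FrameTiming` shape and the `pickSlowestFrames` helper are assumptions made for this example, not the types Remotion exposes.

```typescript
// Hypothetical shape for this sketch: a frame number and its render time in milliseconds.
type FrameTiming = {frame: number; time: number};

// Returns the `limit` slowest frames, slowest first, without mutating the input.
function pickSlowestFrames(
  timings: FrameTiming[],
  limit: number
): FrameTiming[] {
  return timings
    .slice()
    .sort((a, b) => b.time - a.time)
    .slice(0, limit);
}

const sampleTimings: FrameTiming[] = [
  {frame: 0, time: 12},
  {frame: 1, time: 80},
  {frame: 2, time: 45},
];

const slowest = pickSlowestFrames(sampleTimings, 2);
console.log(slowest.map((t) => t.frame)); // frames 1 and 2
```

A ranking like this points to the frames worth optimizing first.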

Negative numbers when rendering a still

When rendering a still, you may now pass a negative frame number to refer to frames from the back of the video. -1 is the last frame of a video, -2 the second last, and so on.
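The mapping from a negative frame number to an absolute frame can be sketched as follows. `resolveStillFrame` is a hypothetical helper written for this example and is not part of the Remotion API; it only mirrors the rule described above.

```typescript
// Hypothetical helper: maps a possibly negative frame number to an absolute frame index.
// -1 refers to the last frame, -2 to the second last, and so on.
function resolveStillFrame(frame: number, durationInFrames: number): number {
  const resolved = frame < 0 ? durationInFrames + frame : frame;
  if (
    Math.floor(resolved) !== resolved ||
    resolved < 0 ||
    resolved >= durationInFrames
  ) {
    throw new RangeError(
      `Frame ${frame} is outside a video with ${durationInFrames} frames`
    );
  }
  return resolved;
}

console.log(resolveStillFrame(-1, 120)); // 119, the last frame
console.log(resolveStillFrame(-2, 120)); // 118, the second last frame
```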

Override FFmpeg command

The FFmpeg command that Remotion executes under the hood can now be overridden reducer-style.
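"Reducer-style" means a function receives the arguments Remotion would pass to FFmpeg and returns the arguments that should be used instead. The sketch below shows the idea in isolation. The `FfmpegOverrideInput` type is an assumed shape for illustration, and `addHideBanner` is a hypothetical reducer; check the Remotion documentation for the real option name and types.

```typescript
// Assumed input shape for this sketch: the list of arguments about to be passed to FFmpeg.
type FfmpegOverrideInput = {
  args: string[];
};

// Hypothetical reducer: returns a new argument list instead of mutating the given one.
// It prepends FFmpeg's global -hide_banner flag unless it is already present.
const addHideBanner = ({args}: FfmpegOverrideInput): string[] => {
  if (args.indexOf('-hide_banner') !== -1) {
    return args;
  }
  return ['-hide_banner', ...args];
};

// Placeholder arguments, not what Remotion actually generates.
const exampleArgs = ['-i', 'input.mp4', 'output.mp4'];
console.log(addHideBanner({args: exampleArgs}));
```

Because the order of FFmpeg arguments matters, a reducer should take care where it adds or removes options.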

Server-side rendering

Resuming renders if they crash

If a render crashes due to being resource-intensive (see: Target closed), Remotion will now retry each failed frame once, to prevent long renders from failing on low-resource machines.
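The retry-once behavior can be pictured with a small generic wrapper. This is only a sketch of the idea, not Remotion's implementation; `renderFrameWithRetry` and its `renderFrame` callback are hypothetical names.

```typescript
// Hypothetical wrapper: tries to render a frame and retries exactly once on failure.
// If the second attempt also fails, the error propagates to the caller.
async function renderFrameWithRetry<T>(
  renderFrame: (frame: number) => Promise<T>,
  frame: number
): Promise<T> {
  try {
    return await renderFrame(frame);
  } catch (firstError) {
    // One retry only, so a persistent failure still surfaces quickly.
    return renderFrame(frame);
  }
}

// Usage with a fake renderer that fails on its first call.
let attempts = 0;
const flakyRenderer = async (frame: number): Promise<string> => {
  attempts++;
  if (attempts === 1) {
    throw new Error('Target closed');
  }
  return `frame-${frame}.png`;
};

renderFrameWithRetry(flakyRenderer, 5).then((file) => console.log(file));
```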

Previously, the progress for rendering and encoding was reported individually. There is a new field, simply named progress, in the onProgress callback that you can use to display progress without calculating it yourself.
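For context, this is roughly the kind of calculation you previously had to write yourself. It assumes rendering and encoding count equally towards the total, which may not match how Remotion weights its own progress value. `overallProgress` and `totalFrames` are hypothetical names for this sketch.

```typescript
// Hypothetical input for this sketch: counts of rendered and encoded frames plus the total.
type RenderCounts = {
  renderedFrames: number;
  encodedFrames: number;
  totalFrames: number;
};

// Combines both phases into one value between 0 and 1.
// Assumption: both phases are weighted equally.
function overallProgress({
  renderedFrames,
  encodedFrames,
  totalFrames,
}: RenderCounts): number {
  if (totalFrames <= 0) {
    return 0;
  }
  const rendering = renderedFrames / totalFrames;
  const encoding = encodedFrames / totalFrames;
  return (rendering + encoding) / 2;
}

console.log(
  overallProgress({renderedFrames: 60, encodedFrames: 30, totalFrames: 120})
); // 0.375
```

With the new progress field, a helper like this is no longer needed.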

Easier function signature for bundle()

Previously, bundle() accepted three arguments: entryPoint, onProgress and options.

Old bundle() signature

```ts
import {bundle} from '@remotion/bundler';

bundle('./src/index.ts', (progress) => console.log(progress), {
  publicDir: process.cwd() + '/public',
});
```

Since getting the progress was less important than some of the options, bundle() now accepts a single object that contains the options, the progress callback and the entryPoint:

New bundle() signature

```ts
import {bundle} from '@remotion/bundler';

bundle({
  entryPoint: './src/index.ts',
  onProgress: (progress) => console.log(progress),
  publicDir: process.cwd() + '/public',
});
```

The previous signature is still supported.

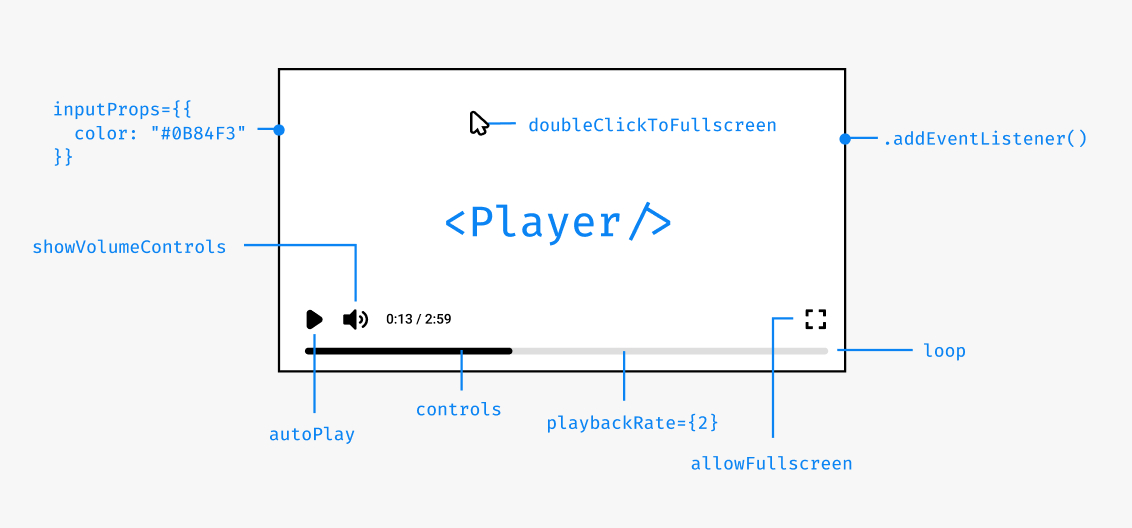

Player

<Thumbnail> component

The new <Thumbnail> component is like the <Player>, but for rendering a preview of a still. You can use it to display a specific frame of a video without having to render it.

```tsx
import {Thumbnail} from '@remotion/player';
// Hypothetical path: import the component you want to preview.
import {MyComp} from './MyComp';

const MyApp: React.FC = () => {
  return (
    <Thumbnail
      component={MyComp}
      compositionWidth={1920}
      compositionHeight={1080}
      frameToDisplay={30}
      durationInFrames={120}
      fps={30}
      style={{
        width: 200,
      }}
    />
  );
};
```

Player frameupdate event

In addition to timeupdate, you can subscribe to frameupdate, which fires whenever the current frame changes. You can use it for example to render a custom frame-accurate time display.
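A frame-accurate time display mostly comes down to formatting the current frame. The sketch below shows one possible formatter. `formatFrame` is a hypothetical helper, it assumes an integer frame rate, and wiring it to the frameupdate event is left out.

```typescript
// Hypothetical helper: formats a frame number as mm:ss.ff,
// where ff is the frame within the current second.
// Assumption: fps is a positive integer.
function formatFrame(frame: number, fps: number): string {
  const totalSeconds = Math.floor(frame / fps);
  const minutes = Math.floor(totalSeconds / 60);
  const seconds = totalSeconds % 60;
  const frameInSecond = frame % fps;
  const pad = (n: number): string => (n < 10 ? `0${n}` : String(n));
  return `${pad(minutes)}:${pad(seconds)}.${pad(frameInSecond)}`;
}

console.log(formatFrame(95, 30)); // 00:03.05
console.log(formatFrame(1830, 30)); // 01:01.00
```

In an app, you would call a formatter like this with the frame reported by each frameupdate event and render the result next to the Player.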

Player volume slider is responsive

If the Player is displayed in a narrow container, the volume control now goes upwards instead of to the right, in order to save some space.

Get the scale of the Player

Using the imperative getScale() method, you can now see how big the displayed size is in comparison to the canvas width of the component.

Controls are initially shown

On YouTube, the video always starts with controls shown and then they fade out after a few seconds. We have made this the default behavior in Remotion as well, because users would often not realize that the Player is interactive otherwise. You can control the behavior using initiallyShowControls.

Play a section of a video

Using the inFrame and outFrame props, you can force the Remotion Player to only play a certain section of a video. The rest of the seek bar will be greyed out.

Using renderPlayPauseButton and renderFullscreenButton, you can customize the appearance of the Player more granularly.

Start player from an offset

You can define the initialFrame on which your component gets mounted. This will be the default position of the video; however, it will not clamp the playback range like the inFrame prop does.

New prefetch() API

In addition to the Preload APIs, prefetch() presents another way of preloading an asset so it is ready to display when it is supposed to appear in the Remotion Player.

Prefetching API

```tsx
import {prefetch} from 'remotion';

const {free, waitUntilDone} = prefetch('https://example.com/video.mp4');

waitUntilDone().then(() => {
  console.log('Video has finished loading');
  free(); // Free up memory
});
```

Video and audio tags will automatically use the prefetched asset if it is available. See @remotion/preload vs. prefetch() for a comparison.

Remix template

The Remix template is our first SaaS template! It includes the Remotion Preview, the Player and Remotion Lambda out of the box to jumpstart you with everything you need to create your app that offers customized video generation.

Get started by running:

```bash
yarn create video --remix
```

```bash
pnpm create video --remix
```

Lambda improvements

Webhook support

You can now send and receive a webhook when a Lambda render is done or has failed. Examples for Next.js and Express apps have been added and our documentation page features a way to send a test webhook.

Payload limit lifted

Previously, the input props passed to a Lambda render could not be bigger than 256KB when serialized. This limit is now lifted: if the payload is big, it is stored in S3 instead of being passed directly to the Lambda function.
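The decision described above can be sketched as a size check on the serialized props. This is an illustration only, not Remotion's implementation. `choosePayloadTarget` is a hypothetical helper, the 256KB threshold is taken from the old limit mentioned above, and the size check is simplified to the string length, which equals the byte count only for ASCII payloads.

```typescript
// Threshold taken from the previous limit mentioned in the text.
const INLINE_LIMIT = 256 * 1024;

type PayloadTarget = 'inline' | 's3';

// Hypothetical helper: decides whether serialized props fit into the function payload.
// Simplification: uses the string length as an approximation of the size in bytes.
function choosePayloadTarget(inputProps: unknown): PayloadTarget {
  const serialized = JSON.stringify(inputProps) || '';
  return serialized.length > INLINE_LIMIT ? 's3' : 'inline';
}

const smallProps = {title: 'Hello'};
// A string of 300 * 1024 characters, well above the threshold.
const largeProps = {blob: new Array(300 * 1024 + 1).join('x')};

console.log(choosePayloadTarget(smallProps)); // inline
console.log(choosePayloadTarget(largeProps)); // s3
```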

Lambda artifact can be saved to another cloud

The output videos generated by Lambda can now be saved to other S3-compatible storage providers such as DigitalOcean Spaces or Cloudflare R2.

Deleting a render from Lambda

The new deleteRender() API will delete the output video from the S3 bucket, which you previously had to do through the console or with the AWS SDK.

The following options are now optional:

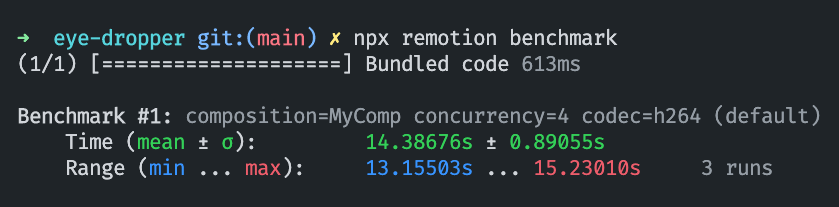

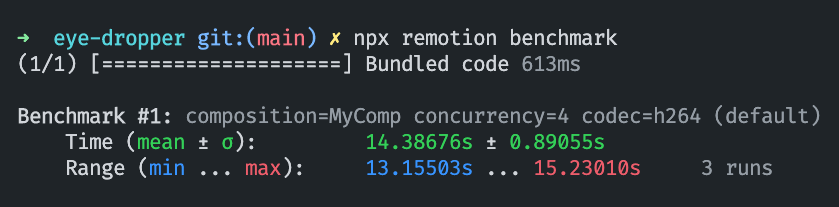

Benchmark command

The new npx remotion benchmark helps you compare different render configurations and find out which one is the fastest. Currently, you can compare different codecs, compositions and concurrency values. Each configuration is run multiple times in order to increase confidence in the results.

New guides

We have added new guides that document interesting workflows for Remotion:

We try to avoid jargon, but we have also created a Remotion Terminology page to define some commonly used terms. When using these terms, we will from now on link to the terminology page so you can read about them.

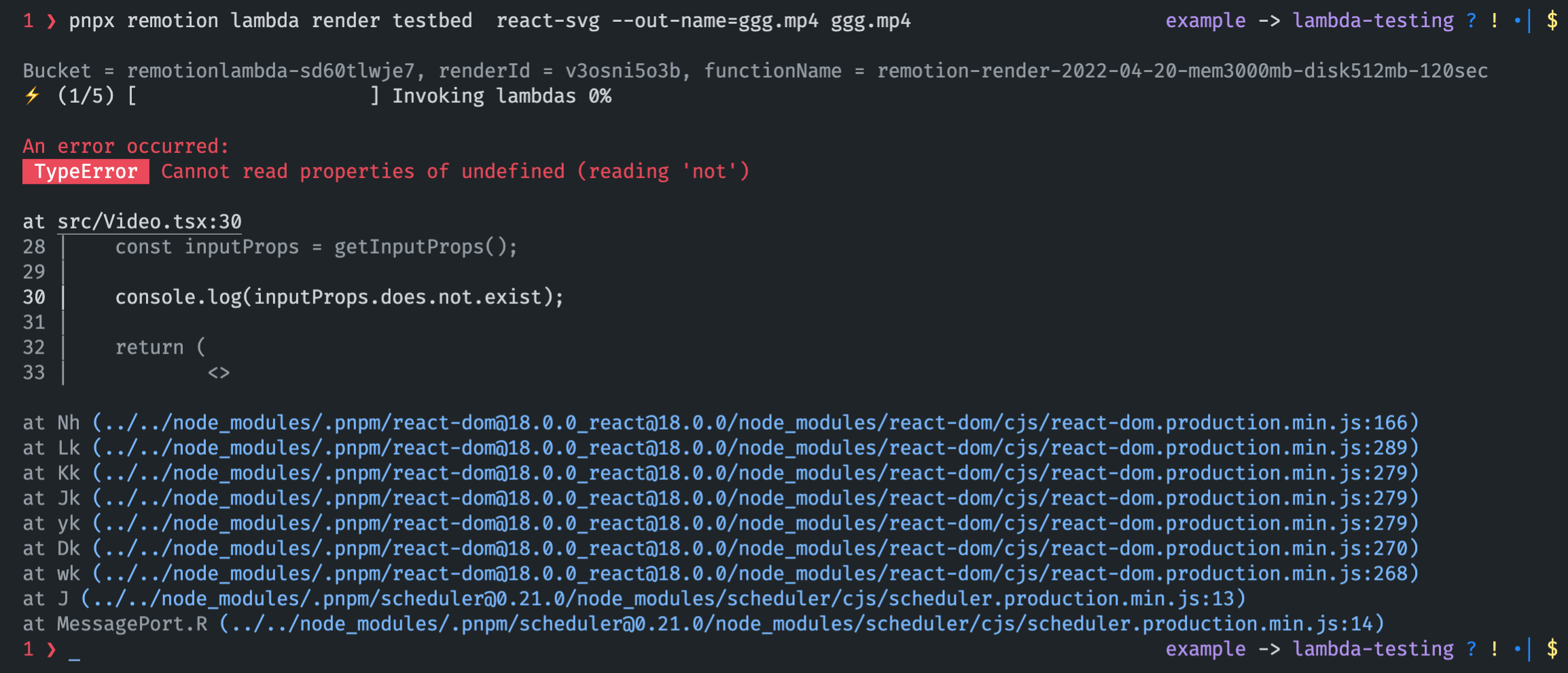

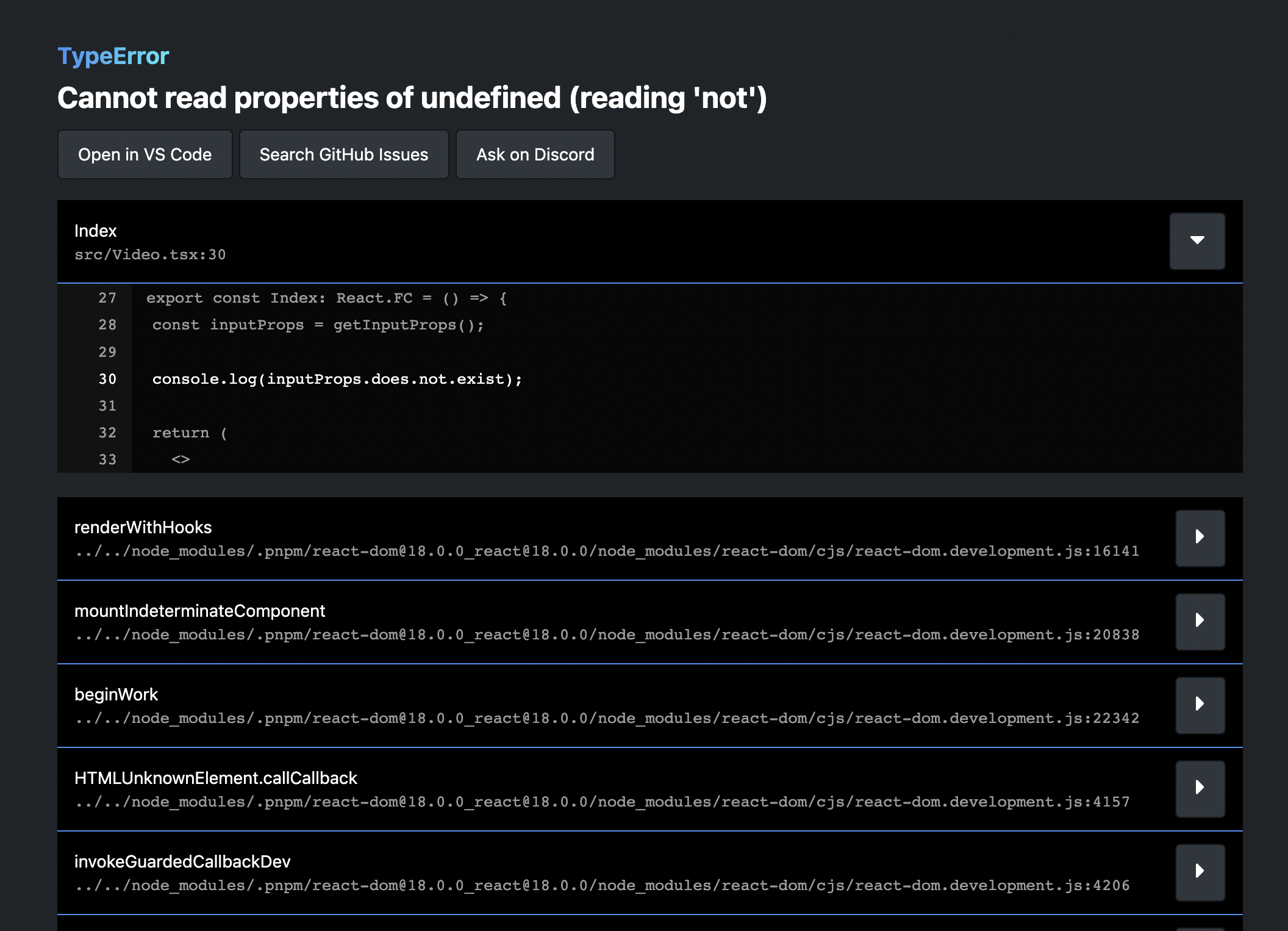

Better structure and naming in templates

The file that was previously called src/Video.tsx in templates is now called src/Root.tsx, because it did not contain a video, but a list of compositions. That component was also renamed from RemotionVideo to RemotionRoot. The new naming makes more sense, because that component is passed into registerRoot().

Notable improvements

Get the duration of a GIF

The new function getGifDurationInSeconds() allows you to get the duration of a GIF.

Lottie animation direction

Using the new direction prop, you can play a Lottie animation backwards.

Lottie embedded images

Should a Lottie animation contain an embedded image, it will now be properly awaited.

Temporary directory cleanup

The temporary directory that Remotion creates is now completely cleaned up after every render.

Parallel encoding turned off if memory is low

Parallel encoding will not be used when a machine has little free RAM. You can also force-disable it using disallowParallelEncoding.

Thank you

Thank you to the contributors who implemented these awesome features:

- ayatko for implementing the @remotion/google-fonts package

- Antoine Caron for implementing the <Thumbnail> component, for reloading the page when the environment variables change and for implementing negative frame indices

- Apoorv Kansal for implementing the documentation search in the Quick Switcher, the benchmark command and the option to customize Play button and fullscreen button in the Player

- Akshit Tyagi for implementing the --height and --width CLI flags

- Ilija Boshkov, Marcus Stenbeck and UmungoBongo for implementing the Motion Blur package

- Ravi Jain for removing the need to pass the entry point to the CLI

- Dhroov Makwana for writing a tutorial on how to import assets from Spline

- Stefan Uzunov for implementing a composition selector if no composition is passed

- Florent Pergoud for implementing the Remix template

- Derryk Boyd for implementing the loop prop for Video and Audio

- Paul Kuhle for implementing Lambda Webhooks

- Dan Manastireau for implementing a warning when using an Intel version of Node.js under Rosetta

- Pompette for making the volume slider responsive

- Logan Arnett for making the composition ID optional in the render command

- Patric Salvisberg for making the FFmpeg auto-install feature

- Arthur Denner for implementing the direction property for the Lottie component

Many of these contributions came during Hacktoberfest where we put bounties on GitHub issues. We also want to thank CodeChem for sponsoring a part of those bounties!